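A Python wrapper class for jieba word segmentation

This post presents a small Python wrapper class around jieba, a Chinese word segmentation library. jieba can pick out the keywords of an article, and those keywords can then feed a summary of the article. More broadly, it makes work on Chinese-language text more convenient for developers.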
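For orientation, the keyword step in the class below calls `jieba.analyse.extract_tags`, which ranks words by TF-IDF weight. The following sketch illustrates that ranking idea with the standard library only, on text that is already tokenized. It is not jieba's implementation: jieba reads IDF values from a prebuilt table, whereas here the IDF is estimated from a tiny made-up corpus, and the name `top_keywords` and the smoothing formula are assumptions chosen for illustration.

```python
import math
from collections import Counter


def top_keywords(doc_tokens, corpus, topk):
    """Rank the tokens of one document by a simple TF-IDF score.

    doc_tokens: list of words from the document to analyse.
    corpus: list of token lists used to estimate document frequency.
    topk: number of (word, weight) pairs to return.
    """
    if not doc_tokens:
        return []
    tf = Counter(doc_tokens)
    total = len(doc_tokens)
    n_docs = len(corpus)
    scores = {}
    for word, count in tf.items():
        # Number of corpus documents that contain the word.
        df = sum(1 for doc in corpus if word in doc)
        # Smoothed IDF (an assumption of this sketch) to avoid division by zero.
        idf = math.log((1 + n_docs) / (1 + df)) + 1
        scores[word] = (count / total) * idf
    # Highest score first; ties are broken alphabetically for a stable order.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return ranked[:topk]


# Toy, hand-tokenized data (hypothetical, for illustration only).
corpus = [
    ["shop", "sells", "genuine", "goods"],
    ["shop", "sells", "fake", "goods"],
    ["counterfeit", "goods", "counterfeit", "shop"],
]
keywords = top_keywords(corpus[2], corpus, 2)
print(keywords)
```

A word that is frequent in one document but rare across the corpus ("counterfeit" here) outranks words that appear everywhere ("goods", "shop"), which is the behaviour the `topK` parameter of `extract_tags` selects from.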

'''
Filename :JiebafenciHelper
Description :Helper class for jieba word segmentation
Time :2021-12-23 11:24:36
Author :www.hao366.net
Version :1.0
'''

import os
import re

import jieba
import jieba.analyse
from sumy.nlp.tokenizers import Tokenizer
from sumy.parsers.plaintext import PlaintextParser
from sumy.summarizers.lsa import LsaSummarizer

# Directory containing this file (used to locate the optional user dictionary)
currentdir = os.path.dirname(os.path.abspath(__file__))

class JieBafenci(object):

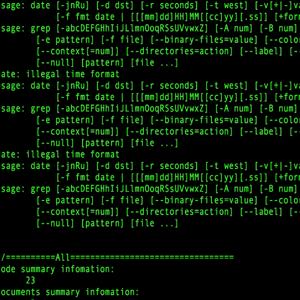

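    # Description: extract high-frequency words for collecting keywords; topk is how many to return
    def GetGaoPinCi(self, content, topk):
        # jieba.load_userdict(os.path.join(currentdir,'jiebaciku.txt'))
        # Replace HTML tags with commas
        content = re.sub('</?.*?>', ',', content)
        # Keep only the first 3000 characters of long input
        content = content[0:3000]
        seg = jieba.cut(content, cut_all=False)
        output = ' '.join(seg)
        # Parameters of jieba.analyse.extract_tags:
        # * sentence (first argument): the text to extract keywords from, as a string
        # * topK: number of keywords with the highest TF-IDF weight to return, default 20
        # * withWeight: whether to return each keyword's weight as well, default False
        # * allowPOS: only include words with the given parts of speech, default empty
        try:
            keywords = jieba.analyse.extract_tags(
                output, topK=topk, withWeight=True, allowPOS=())
        except Exception:
            # The original silently returned None here; an empty list is easier for callers to handle
            return []
        return keywords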

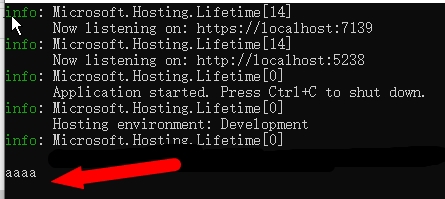

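    # Description: extract a summary from text; num is how many sentences to extract, each roughly 100 characters
    def GetDescription(self, text, num):
        # Replace HTML tags with commas
        text = re.sub('</?.*?>', ',', text)
        # Truncate the text if it is too long
        text = text[0:3000]
        # Build a parser from the string, using the Chinese tokenizer
        parser = PlaintextParser.from_string(text, Tokenizer("chinese"))
        # Create the summarizer
        summarizer = LsaSummarizer()
        # Extract num summary sentences
        summary = summarizer(parser.document, num)
        # Join the sentences with commas and trim commas at both ends
        result = ','.join(str(sentence) for sentence in summary)
        result = result.strip(',')
        return result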

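if __name__ == "__main__":
    jb = JieBafenci()
    # Sample text: "Does JD.com sell fake goods? How do you tell genuine goods from fakes?"
    fc = '''
京东商城有假货吗?如辨别是真货还是假货?
'''
    # Top 2 keywords as (word, weight) pairs
    result = jb.GetGaoPinCi(fc, 2)
    print(result)
    # Summary of up to 3 sentences
    result2 = jb.GetDescription(fc, 3)
    print(result2)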
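
Both methods above preprocess their input the same way: HTML tags are replaced with commas and the text is cut to its first 3000 characters. A minimal standard-library sketch of that step on its own may help show what the segmenter actually receives. The helper name `clean_text`, its `max_len` parameter, and the sample string are hypothetical and not part of the original class.

```python
import re

# Same pattern as in the class: a non-greedy match of anything between '<' and '>'.
TAG_PATTERN = re.compile(r'</?.*?>')


def clean_text(html, max_len=3000):
    """Replace HTML tags with commas, then keep at most max_len characters."""
    text = TAG_PATTERN.sub(',', html)
    return text[:max_len]


# Made-up sample input, for illustration only.
sample = '<p>Hello <b>world</b></p>'
cleaned = clean_text(sample)
print(cleaned)
```

Two things are worth noticing. Every tag becomes a comma, so adjacent tags leave runs of commas in the text. Also, `.` does not match a newline by default, so a tag that spans several lines is left untouched by this pattern.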